
Authentic online learning: Aligning learner needs, pedagogy and technology

Jenni Parker, Dorit Maor and Jan Herrington

Murdoch University

Over the past few decades, there has been a substantial swing among higher education practitioners towards a more constructivist approach to learning. Nevertheless, many instructivist models are still widely used in both classroom and online learning environments. A key challenge for educators is linking learner needs, pedagogy and technology in order to construct more interactive, engaging and student-centred environments that promote 21st century skills and encourage self-directed learning. Existing research suggests that the use of real-life tasks supported by new technologies, together with access to the vast array of open educational resources on the Internet, has the potential to improve the quality of online learning. This article describes how an authentic online professional development course for higher education practitioners was designed and implemented using a learning management system (LMS) and an open companion website. It then discusses how the initial iteration of the course was evaluated and provides recommendations for improving the second iteration. Finally, it describes how the second iteration was modified and implemented.

A design-based research approach was employed to explore possible solutions for designing and implementing effective online higher education courses, based on a social constructivist model of learning (cf. Parker, 2011). Design-based research, like action research, is conducted at the coal face; however, it involves an ongoing iterative process of monitoring the effectiveness of a specifically designed artefact through successive implementations of a learning solution (Kelly, 2006). Key elements of this approach include: addressing complex problems in collaboration with practitioners, integrating design principles with new technologies to develop practical solutions to the problem, and conducting effectiveness evaluations to refine the proposed solution and identify new design principles (Reeves, 2006).

A review of existing research and informal discussions with higher education practitioners suggested that teachers needed to experience new learning environments as learners themselves in order to implement changes to their teaching approach (Maor, 1999). Therefore, one potential innovative solution for changing existing online teaching practices was to develop an online course based on authentic learning principles, in which university professionals were immersed in the pedagogical environment (cf. Parker, 2011).

In this paper, we describe how an online professional development course for higher education practitioners based on authentic learning principles (Herrington, Reeves & Oliver, 2010) was designed and implemented. We then discuss student and facilitator reflections on the effectiveness of the first implementation of the course, and present recommendations for improving the effectiveness of the design approach. Finally, we discuss how the recommendations were incorporated in the second implementation of the course.

The designed solution was implemented and two iterations were conducted with the target group (higher education practitioners) over a period of eight months. Participant feedback and facilitator reflections from the first iteration of the course were analysed to identify areas for improving the second iteration. Recommendations for improving the course were implemented prior to conducting the second iteration.

Qualitative methods were used to allow detailed information to be collected from participants about their experience with the authentic learning environment and tasks. The major data collection methods were a participant background survey (before the course), a participant teacher perspective survey (after the course), participant artefacts and comments made during the normal progression of the course, facilitator reflections, and an anonymous online course evaluation questionnaire completed by participants at the end of the course.

Data, including transcripts of interviews, researcher notes and other documentary evidence, were coded and analysed using Glaser and Strauss's (1967) constant comparative method of qualitative analysis. This joint coding and analysis method enabled data to be systematically categorised and analysed using standardised measures, so that participant responses could be grouped into relevant themes to facilitate comparison and analysis.

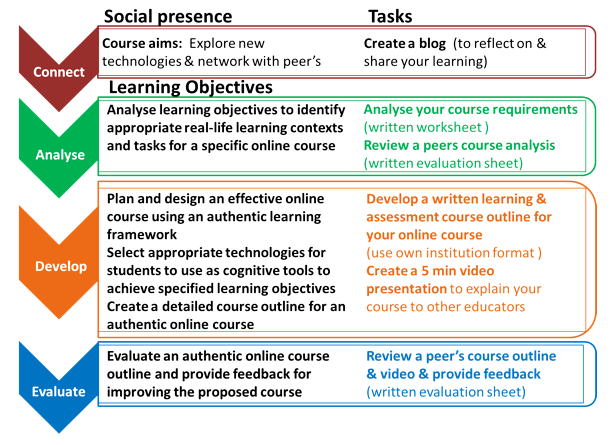

Herrington et al.'s authentic learning design framework (2010, p. 128) was extended to include learning objectives and to identify components of the course that needed to be situated within a protected environment (for reasons of confidentiality). This extended framework provided overall guidance for the design and implementation of the course (see Figure 1), and was also used as a support resource to help participants design their own online courses. Herrington et al.'s elements of authentic learning (2010, p. 18) and elements of authentic tasks (2010, pp. 46-48) were used to ensure the course and task design adhered to authentic learning principles.

Figure 1: The extended authentic learning design framework

(based on Herrington et al., 2010)

The course was designed to meet five learning objectives, and an overall complex task was developed to enable participants to demonstrate the use of higher-level cognitive skills in achieving those objectives. The overall task required participants to plan an authentic online course for their specific area of teaching in higher education, create a detailed course outline, and present a video overview of the course to their colleagues. Figure 2 explains the relationship between the learning objectives and the course tasks. Specific task requirements were outlined in the course guide, and example documents, readings and tutorials were used to guide learning.

Figure 2: Relationship between learning objectives and tasks

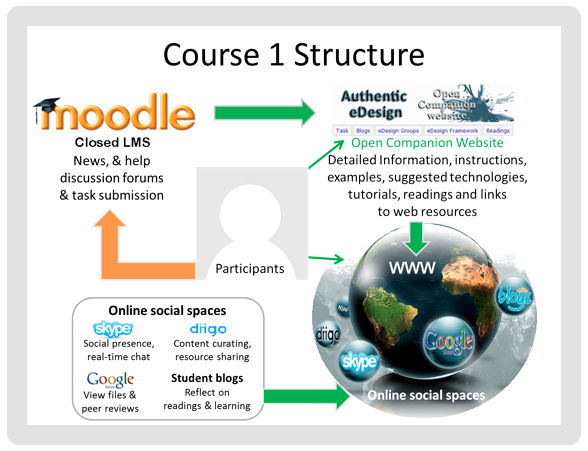

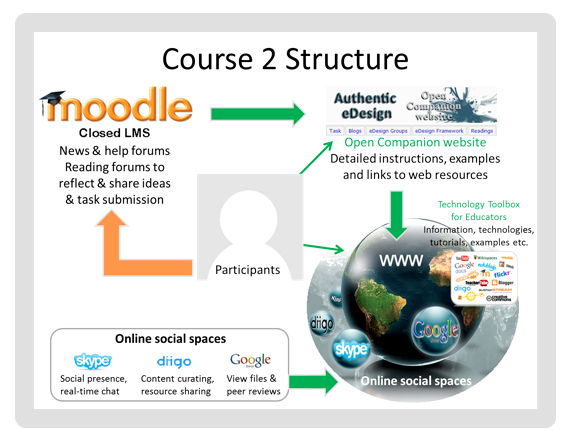

The course was implemented using a Moodle LMS and an open companion website created on Google Sites (see Figure 3). The LMS acted as the central hub for course announcements and provided a protected environment for the confidential components of the course. The companion website was the primary learning environment and contained detailed task instructions, course content, task and support resources. The primary reason for using an open companion website was to enable participants to access the course resources after the completion of the course.

Garrison, Anderson and Archer's community of inquiry framework (2000, p. 88) was used to guide the selection of technologies to support teaching, cognitive and social presence in the online environment. "The premise for this framework is that higher-order learning is best supported in a community of learners engaged in critical reflection and discourse" (Garrison, Cleveland-Innes & Fung, 2010, p. 32), and that technologies are most effective when they are used by learners to construct, create and communicate their learning (Garrison & Akyol, 2009), which is consistent with a social constructivist learning approach, such as authentic learning.

Many educators believe learning should be open and social (Brown & Adler, 2008; Caswell, Henson, Jensen & Wiley, 2008; Cormier & Siemens, 2010; Downes, 2009; Murphy, 2012), and teachers and learners are increasingly showing interest in social web technologies, as they "can support self-governed, problem-based and collaborative activities in a better way" (Baltzersen, 2010). Social web technologies such as blogs, wikis, social media sharing and social networking sites give learners ways to express themselves and get to know their fellow learners, which can lead to a more comfortable and supportive learning environment. A Skype chat group and a Diigo bookmarking library group were created to encourage participants to engage in social and cognitive discourse. Google Docs was used as a collaborative space where participants shared their website URLs to facilitate peer review.

Figure 3: Authentic eDesign course structure

The course learning materials were primarily links to existing relevant and expert resources available on the web. There is a rapidly growing pool of open educational resources (OER) that educators can access, modify and reuse to support student learning. OER are materials used to support education that are licensed to allow free access and use by anyone in the world (Baltzersen, 2010; Curtin University, 2011). Using open access materials provided participants with a broader range of information than a single textbook, and encouraged them to think critically and make decisions about the content they used. It also made information and resources easier for them to access, as there are fewer barriers (Cormier & Siemens, 2010; Harris, 2012). Links to web-based tools such as wikis, websites, blogs, videos and podcasts were included to help participants explore how new technologies could be used as cognitive tools to support student learning. Participants were asked to create a blog on the open web where they could reflect on their learning throughout the course, and were encouraged to access each other's work to provide comments and feedback.

Fourteen higher education practitioners from three Western Australian universities registered for the course. Several people withdrew from the course before completing the Week 1 activities, all citing lack of time due to high workloads as the primary reason: "We are a little under the pump at the moment, I am writing a whole new unit" (Participant AW, email). Six participants from two universities completed the course. According to Maor and Volet (2007), high dropout rates in online professional development courses are common, with attrition rates ranging from as low as 13.5% to as high as 75%. Factors such as motivation, readiness to study, technical skills, and lack of time due to workloads or family commitments are common barriers to completing online courses.
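The enrolment figures above can be turned into a rough non-completion rate and compared with the range reported by Maor and Volet (2007). The short Python sketch below is illustrative only: it assumes that every registered practitioner who did not finish counts as attrition, since the exact point at which each person withdrew is not reported, and the variable names are hypothetical.

```python
# Illustrative check of the first-iteration enrolment figures.
# Assumption: anyone who registered but did not complete counts as attrition.
REGISTERED = 14  # practitioners who registered for the course
COMPLETED = 6    # participants who completed the course

# Attrition range for online professional development reported by Maor and Volet (2007)
REPORTED_LOW, REPORTED_HIGH = 0.135, 0.75

did_not_complete = REGISTERED - COMPLETED
attrition = did_not_complete / REGISTERED

print(did_not_complete)                            # 8
print(f"{attrition:.1%}")                          # 57.1%
print(REPORTED_LOW <= attrition <= REPORTED_HIGH)  # True
```

On this reading, roughly 57% of registrants did not complete, which falls within the 13.5% to 75% range cited above.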

Although these practitioners lacked time for a variety of reasons, such as taking on a new role (Participant MA, email), teaching an intensive week of an MBA unit (Participant GS, email), and teaching an Open Universities Australia unit that was to run back to back with no breaks (Participant EC, email), it was clear that those who withdrew were keen to learn about authentic pedagogies and new technologies, as many requested to be transferred to the second course scheduled to run in the following semester.

The course, titled Authentic eDesign (see http://www.elearnopen.info/courses), was developed to test the effectiveness of an authentic learning approach supported by new technologies and open access to a vast array of resources on the Internet. The aim of the course was to assist educators to learn how to create more interactive and engaging online learning environments, specifically to:

The focus of this article is a preliminary analysis of the data collected from the anonymous online course evaluation questionnaire conducted at the end of the first iteration of the course, and of the facilitator reflections for improving the effectiveness of the framework for the second iteration. Five participants completed the online course evaluation questionnaire, which included 35 closed questions using a four-point scale (strongly agree, SA; agree, A; disagree, D; strongly disagree, SD; see Table 1 below) and two open short answer questions.

| Question | SA | A | D | SD |
|---|---|---|---|---|
| The course context represented the kind of setting where the skill or knowledge would be applied | 60% | 40% | | |
| The course environment provided a flexible pathway, where I was able to move around at will | 80% | 20% | | |
| The tasks mirrored the kind of activities performed in real-world applications | 100% | | | |
| The task was presented as an overarching complex problem | 60% | 40% | | |
| The activities required significant investment of my time and intellectual resources | 80% | 20% | | |
| I was able to choose information from a variety of inputs, including relevant and irrelevant sources | 40% | 40% | 20% | |
| The tasks were ill-defined and open to multiple interpretations | 60% | 40% | | |
| The tasks afforded the opportunity to examine the problem from a variety of theoretical and practical perspectives | 20% | 80% | | |
| I was required to take on diverse roles across different domains of knowledge in order to complete the tasks | 20% | 60% | 20% | |
| Task assessment (evaluation) was seamlessly integrated with the major task in a manner that reflected real-world practices | 40% | 60% | | |
| The tasks allowed a range and diversity of outcomes open to multiple solutions of an original nature | 80% | 20% | | |
| The learning environment provided access to expert skill and opinion | 100% | | | |
| The learning environment allowed access to other learners at various stages of expertise | 100% | | | |
| I was able to hear and share stories about professional practice | 40% | 60% | | |
| I was able to explore issues from different viewpoints | 20% | 60% | 20% | |
| I was able to use the learning resources and materials for multiple purposes | 100% | | | |
| I was provided with sufficient opportunities to collaborate (rather than simply cooperate) on tasks | 20% | 60% | 20% | |
| I was provided with sufficient opportunities to reflect on the course content and my own learning | 20% | 80% | | |
| I was required to make decisions about how to complete tasks | 100% | | | |
| I was able to move freely in the environment and return to any element to act upon reflection | 80% | 20% | | |
| I was able to compare my thoughts and ideas to experts, teachers, guides and/or peers | 40% | 60% | | |
| I was able to work in collaborative groups that enabled discussion and social reflection | 20% | 60% | 20% | |
| The tasks required me to discuss and articulate my beliefs and growing understanding | 100% | | | |
| The environment provided collaborative group spaces and forums that enabled articulation of ideas | 40% | 60% | | |
| The environment enabled more knowledgeable learners to assist with coaching | 40% | 60% | | |
| The facilitator provided contextual support and guidance | 100% | | | |
| The facilitator provided timely and helpful feedback | 100% | | | |
| The activities culminated in the creation of a polished product that would be acceptable in the workplace | 80% | 20% | | |
| The task enabled me to present my finished product (concepts and ideas) to a public audience | 60% | 40% | | |
| The activities allowed for multiple assessment measures | 60% | 40% | | |
| I felt comfortable learning in an open environment | 20% | 60% | 20% | |
| The technologies I was required to use in the course aided my learning | 60% | 40% | | |
| The recommended readings were useful for learning about the concepts covered in the course | 60% | 40% | | |
| The technologies used in the course demonstrated some of the ways these tools could be used to assist student learning | 80% | 20% | | |
| Overall I thought the course was a useful professional development opportunity | 80% | 20% | | |
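Because only five participants completed the questionnaire, every percentage in Table 1 corresponds to a whole number of respondents (20% per person), which is how a 40% response translates into "two of the participants" in the discussion that follows. The Python sketch below makes that conversion explicit; the helper names `respondents` and `row_counts` are hypothetical and the respondent count of five is taken from the text.

```python
# Convert Table 1 percentages back into numbers of respondents.
# Assumes the five questionnaire respondents reported in the text.
N_RESPONDENTS = 5


def respondents(pct, n=N_RESPONDENTS):
    """Return the number of respondents that a response percentage represents."""
    raw = pct * n / 100
    count = round(raw)
    # With five respondents, every valid percentage is a multiple of 20%.
    if abs(raw - count) > 1e-9:
        raise ValueError(f"{pct}% is not a whole number of {n} respondents")
    return count


def row_counts(percentages, n=N_RESPONDENTS):
    """Convert one table row and check that it accounts for every respondent."""
    counts = [respondents(p, n) for p in percentages]
    if sum(counts) != n:
        raise ValueError("row does not account for every respondent")
    return counts


print(respondents(40))       # 2
print(row_counts([80, 20]))  # [4, 1]  ("Overall ... useful professional development")
```

The row check is a simple sanity test: a row whose percentages do not add up to all five respondents signals a transcription error in the table.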

The initial data analysis indicates that practitioners responded positively to this innovative learning approach, as all participants agreed that the course was a useful professional development opportunity. It is interesting to note that two of the participants did not think the tasks were ill-defined and open to multiple interpretations. Yet each participant produced a course outline tailored to their specific area of teaching and identified appropriate learning and assessment methods and supporting technologies. No two course outlines were the same, and participants identified a wide variety of methods and technologies, which indicates that the task was open to multiple interpretations. Perhaps these participants were suggesting that the task was not badly defined, which is a common misinterpretation of this element.

In response to the first short answer question (What did you think were the strongest aspects of the course?), one person responded, "I was able to redevelop my unit plan and activities in my online unit as part of the course ready for semester one". Another commented on the flexibility of being able to control the pace of their learning: "the online aspect of the unit allowed me to complete the tasks at my convenience". Access to new technologies was another positive aspect identified by a couple of participants: "the opportunity to develop my units with more consideration of how technology can support learning" and "appropriate technology choices".

Responses to the second short answer question (What areas do you think could be improved?) identified a few areas for improvement. One person stated, "the blogging was difficult as I struggled a bit with the purpose", and another advised, "3 hours a week was nowhere near enough time to allocate". Participant workloads were also an issue: "because I was so busy, I would have liked the course to have one less element to complete - I didn't complete the video (which I feel guilty about)". Another participant offered a constructive suggestion about the use of the Diigo, Skype and Google Docs technologies: "I wonder if these could have been introduced with a brief, specific activity that both familiarise us with the technology and demonstrated its usefulness to our learning".

The facilitator reflections confirmed that most learners struggled to complete the activities within the allocated time frame and that some participants had issues installing the necessary software on their work computers. The reflections also suggested that a blog was not the best tool for participants to reflect on their learning and discuss the concepts covered in the readings: given the relatively short duration of the course, the time required to set up and learn about blogging left little time for reflection.

| Elements | Issues | Recommendations |
|---|---|---|
| Authentic tasks | Time allocation insufficient. | Increase the time allocation and reduce content or simplify tasks (e.g., replace overview video with simple feedback screencast). Advise participants to install software prior to course commencement. |
| | Task technologies (Skype and Diigo). | Skype: include reading and forum question about social presence. Diigo: encourage participants to comment on readings and add a resource to the Diigo group. |
| Collaboration | No issues identified, but limited collaboration required. | Include peer review of analysis worksheet. |
| Reflection | Pre- and post-course participant surveys. | Use a different tool so participants can refer back to their pre-course survey. |
| | Blogging purpose not clear and time-consuming. | Replace blog with an easy-to-use tool (e.g., a forum for weekly reflections). |

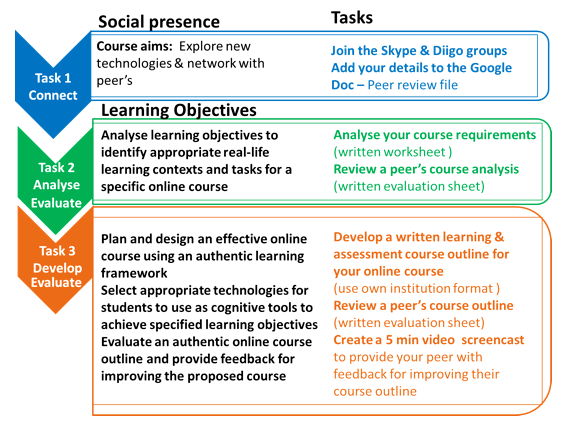

For the second iteration of the course, changes were made to the recommended pre-course preparation process. Instead of waiting until Week 1 to set up required technologies, participants were advised they would need to download and install the Skype and Diigo software before the commencement of the course. The learning objectives were the same as the first iteration; however, in Iteration 2, the task requirements were modified slightly, and participants were advised that they would need to dedicate more time to the course (4 hours per week). The 5-minute course overview video presentation was replaced with a screencast video for participants to provide constructive feedback to each other. Figure 4 below explains the relationship between the learning objectives and the course tasks.

Figure 4: Relationship between learning objectives and tasks - second iteration

The course structure was modified (see Figure 5) to include an open public wiki (Technology Toolbox for Educators) so that suggested technologies, pedagogies and tutorials did not have to be recreated on the companion website each time the course was implemented. Most of the content in the Moodle LMS was removed and added to either the open companion website or the Technology Toolbox for Educators wiki, and weekly reflection forums were added to the Moodle LMS to replace the blog activity.

Specific activities and information were included on the open companion website and the Moodle LMS to help participants learn about Diigo and Skype. The activities were designed to encourage participants to explore these social technologies and to model how they could be used to support student learning. All readings were added to the Diigo library, and links were provided on the Lectures & Readings page on the open companion website to redirect participants to the Diigo library. Participants were asked to share their understanding of the theoretical concepts covered in the readings by adding comments to the relevant resource in the Diigo group library. Short 10-minute weekly lectures about the concepts covered in the readings were also added to the Lectures & Readings page on the companion website. Social presence information was added to the eDesign Groups page on the companion website, and a reading about using Skype to build social presence was added to the reading list. A question was also added to the Week 1 reflection forum in the Moodle LMS asking students to share their experience of how they had used Skype in their courses, or to reflect on how Skype could be used in their future courses. In this way, both theoretical and practical considerations based on participant feedback in the evaluation guided the redesign of the course for implementation with a new cohort of students.

Figure 5: Authentic eDesign course structure - second iteration

Modifications made to the second iteration of the course have the potential to resolve the issues of inadequate time allocation and the limited usefulness of the task technologies (specifically Skype and Diigo) that participants identified in the first iteration of the course. However, external factors such as participants' high workloads often result in low completion rates, an increasingly prevalent and problematic issue for online learners. Participation in programs for improving online teaching practices is often voluntary and without institutional support, resulting in poor attendance in most courses. Consequently, the majority of attendees are staff who are genuinely interested in online learning and are willing to sacrifice their own time and resources to achieve a learning goal (Weaver, Robbie & Borland, 2008).

If universities wish to improve the quality of existing online courses, they perhaps need to investigate ways to support and encourage educators to attend and complete professional development activities. Nevertheless, this research indicates that an authentic approach appears to provide a useful and engaging theoretical design framework for participants who are able to commit personally and practically to online learning.

Baltzersen, R. K. (2010). Radical transparency: Open access as a key concept in wiki pedagogy. Australasian Journal of Educational Technology, 26(6), 791-809. http://www.ascilite.org.au/ajet/ajet26/baltzersen.html

Brown, J. S. & Adler, R. P. (2008). Minds on fire: Open education, the long tail and learning 2.0. Educause Review Online, 43(1), 16-32. http://net.educause.edu/ir/library/pdf/ERM0811.pdf

Caswell, T., Henson, S., Jensen, M. & Wiley, D. (2008). Open educational resources: Enabling universal education. International Review of Research in Open and Distance Learning. http://www.irrodl.org/index.php/irrodl/article/view/469/1001

Collins, A. & Halverson, R. (2009). Rethinking education in the age of digital technology. New York: Teachers College Press.

Cormier, D. & Siemens, G. (2010). Through the open door: Open courses as research, learning and engagement. EDUCAUSE Review, 45(4), 30-39. http://net.educause.edu/ir/library/pdf/ERM1042.pdf

Curtin University (2011). Issue 26 - Open Educational Resources (OER). http://blogs.curtin.edu.au/cel/741/newsletter26

Darabi, A., Arrastia, M. C., Nelson, D. W., Cornille, T. & Liang, X. (2010). Cognitive presence in asynchronous online learning: A comparison of four discussion strategies. Journal of Computer Assisted Learning, 27(3), 216-227. http://dx.doi.org/10.1111/j.1365-2729.2010.00392.x

Downes, S. (2009). Downes-Wiley: A conversation on open educational resources. http://www.downes.ca/files/Downes-Wiley.pdf

Garrison, D. R. & Akyol, Z. (2009). Role of instructional technology in the transformation of higher education. Journal of Computing in Higher Education, 21(1), 19-30. http://dx.doi.org/10.1007/s12528-009-9014-7

Garrison, D. R., Anderson, T. & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2-3), 87-105. http://dx.doi.org/10.1016/S1096-7516(00)00016-6

Garrison, D. R., Cleveland-Innes, M. & Fung, T. S. (2010). Exploring causal relationships among teaching, cognitive and social presence: Student perceptions of the community of inquiry framework. The Internet and Higher Education, 13(1-2), 31-36. http://dx.doi.org/10.1016/j.iheduc.2009.10.002

Gravemeijer, K. & Cobb, P. (2006). Design research from a learning design perspective. In J. van den Akker, K. Gravemeijer, S. McKenney & N. Nieveen (Eds.), Educational design research. Abingdon, Oxon: Routledge.

Harris, S. (2012). Moving towards an open access future: The role of academic libraries. SAGE and British Library. http://www.uk.sagepub.com/repository/binaries/pdf/Library-OAReport.pdf

Herrington, J., Reeves, T. C. & Oliver, R. (2010). A guide to authentic e-learning. New York: Routledge.

Hodges, C. B. & Repman, J. (2011). Moving outside the LMS: Matching Web 2.0 tools to instructional purpose. EDUCAUSE Learning Initiative, September. http://net.educause.edu/ir/library/pdf/ELIB1103.pdf

Kelly, A. E. (2006). Quality criteria for design research. In J. van den Akker (Ed.), Educational design research (pp. 107-118). Abingdon, Oxon: Routledge.

Kim, B. & Reeves, T. C. (2007). Reframing research on learning with technology: In search of the meaning of cognitive tools. Instructional Science, 35(3), 207-256. http://dx.doi.org/10.1007/s11251-006-9005-2

Lambert, J. & Cuper, P. (2008). Multimedia technologies and familiar spaces: 21st-century teaching for 21st-century learners. Contemporary Issues in Technology and Teacher Education, 8(3), 264-276. http://www.citejournal.org/vol8/iss3/currentpractice/article1.cfm

Lane, L. M. (2008). Toolbox or trap? Course management systems and pedagogy. EDUCAUSE Quarterly, 31(2), 4-6. http://net.educause.edu/ir/library/pdf/eqm0820.pdf

Levin-Goldberg, J. (2012). Teaching generation techX with the 4Cs: Using technology to integrate 21st century skills. Journal of Instructional Research, 1(1), 56-66. http://cirt.gcu.edu/jir/documents/2012-v2011/goldbergpdf

Lombardi, M. M. (2007). Authentic learning for the 21st Century: An overview. EDUCAUSE Learning Initiative White Papers. http://net.educause.edu/ir/library/pdf/eli3009.pdf

Maor, D. (1999). A teacher professional development program on using a constructivist multimedia learning environment. Learning Environments Research, 2(3), 307-330. http://dx.doi.org/10.1023/A:1009915305353

Maor, D. (2003). Teacher's and students' perspectives on on-line learning in a social constructivist learning environment. Technology, Pedagogy and Education, 12(2), 201-218. http://dx.doi.org/10.1080/14759390300200154

Maor, D. (2007). The cognitive and social processes of university students' online learning. In ICT: Providing choices for learners and learning. Proceedings ascilite Singapore 2007. http://www.ascilite.org.au/conferences/singapore07/procs/maor.pdf

Maor, D. & Volet, S. (2007). Engagement in professional online learning: A situative analysis of media professionals who did not make it. International Journal on E-learning, 6(1), 95-117. http://www.editlib.org/p/6203

MoodleDocs (2010). Pedagogy 2.5. http://docs.moodle.org/en/Pedagogy

Murphy, J. (2012). LMS teaching versus community learning: A call for the latter. Asia Pacific Journal of Marketing and Logistics, 24(5), 826-841. http://dx.doi.org/10.1108/13555851211278529

Oliver, R. (2005). Ten more years of educational technologies in education: How far have we travelled? Australian Educational Computing, 20(1), 18-23. http://acce.edu.au/sites/acce.edu.au/files/pj/journal/AEC%20Vol%2020%20No%201%202005%20Ten%20more%20years%20of%20educational%20technolog.pdf

Parker, J. (2011). A design-based research approach for creating effective online higher education courses. WAIER Research Forum August 2011, University of Notre Dame Fremantle. http://www.waier.org.au/forums/2011/abstracts.html#parker

Reeves, T. C. (2006). Design research from a technology perspective. In J. van den Akker (Ed.), Design methodology and developmental research in education and training. The Netherlands: Kluwer.

Rotherham, A. J. & Willingham, D. (2009). 21st century skills: The challenges ahead. Educational Leadership, 67(1), 16-21. http://www.ascd.org/publications/educational-leadership/sept09/vol67/num01/21st-Century-Skills@-The-Challenges-Ahead.aspx

Weaver, D., Robbie, D. & Borland, R. (2008). The practitioner's model: Designing a professional development program for online teaching. International Journal on E-Learning, 7(4), 759-774. http://www.editlib.org/p/24411

The articles in this Special issue, Teaching and learning in higher education: Western Australia's TL Forum, were invited from the peer-reviewed full papers accepted for the Forum, and were subjected to a further peer review process conducted by the Editorial Subcommittee for the Special issue. Authors accepted for the Special issue were given options to make minor or major revisions (minor additions in the case of Parker, Maor and Herrington). The reference for the Forum version of their article is:

Parker, J., Maor, D. & Herrington, J. (2013). Under the hood: How an authentic online course was designed, delivered and evaluated. In Design, develop, evaluate: The core of the learning environment. Proceedings of the 22nd Annual Teaching Learning Forum, 7-8 February 2013. Perth: Murdoch University. http://ctl.curtin.edu.au/professional_development/conferences/tlf/tlf2013/refereed/parker.html

Authors: Jenni Parker is a lecturer in the School of Education at Murdoch University in Perth, Western Australia. She teaches in the educational technology stream in the School of Education, and her main areas of research are authentic e-learning, new technologies and open educational resources. Email: j.parker@murdoch.edu.au Web: http://www.elearnopen.info/

Dorit Maor is a senior lecturer at the School of Education, Murdoch University. She has over two decades of research and teaching in eLearning, in particular the integration of innovative pedagogies with new technologies. She is the recipient of a 2012 Australian Award for University Teaching from the OLT for outstanding contributions to student learning. Email: d.maor@murdoch.edu.au Web: http://profiles.murdoch.edu.au/myprofile/dorit-maor/

Jan Herrington is a Professor of Education at Murdoch University. She teaches in the educational technology stream in the School of Education, and her main areas of research are authentic e-learning, mobile learning and design-based research [http://authenticlearning.info/]. Email: j.herrington@murdoch.edu.au Web: http://profiles.murdoch.edu.au/myprofile/jan-herrington/

Please cite as: Parker, J., Maor, D. & Herrington, J. (2013). Authentic online learning: Aligning learner needs, pedagogy and technology. In Special issue: Teaching and learning in higher education: Western Australia's TL Forum. Issues in Educational Research, 23(2), 227-241. http://www.iier.org.au/iier23/parker.html