
A perspective on supporting STEM academics with blended learning at an Australian university

Rachael Hains-Wesson

Swinburne University of Technology, Australia

Russell Tytler

Deakin University, Australia

Design-based educational research can provide a lens for understanding the complexities around imaginative methods. It can also create an avenue for sharing personal insights that help solve teaching and learning problems and direct future efforts. In this study, the 'I' narrative was used extensively in the form of an autoethnographic perspective. This was achieved by incorporating three self-report methods within a design experiment in order to explore the messiness associated with showcasing the creation and modification of a faculty-wide blended learning framework for STEM teachers. Data generated from three sources provided the evidential basis for investigating this process: (1) self-reflection, (2) key literature findings, and (3) critical discussions within a community of inquiry. The findings identified three features of the change process that were particularly challenging and for which STEM academics required support: educators' professional context; finding models to support change in practice; and identifying the change agent. The paper argues for the use of a personal and complex methodology to inform practice and provide insights into the change process, because process is just as important as product.

The rapid growth in online teaching across the Australian university sector (Fletcher & Bullock, 2015), together with the requirement to enhance the delivery of all STEM subjects, has made blended learning an attractive option for such improvement (Marginson, Tytler, Freeman & Roberts, 2013; Picciano, 2009; Torrisi-Steele, 2011). More than a decade ago, blended learning was already judged to be "all pervasive in the training industry" (Reay, 2001, p. 6). Members of the American Society for Training and Development also argued that blended learning was one of the top emerging trends in the knowledge delivery industry (Finn, 2002). However, the term blended learning can mean different things to different people. Torrisi-Steele's (2011) definition states, "blended learning aims to enrich student-centred learning experiences made possible by the harmonious integration of various strategies, achieved by combining f2f [face-to-face] interaction with ICT [information communications technology]" (p. 366). This is a useful definition because it emphasises the "harmonious integration" of ICT into the face-to-face learning environment. Torrisi-Steele's (2011) work also suggests three dimensions for creating an effective blended learning model:

Throughout my time as a blended learning specialist, I continually reflected on self, other, and context, and came to value recognising myself as a subject of the research (Dyson, 2007) as well as a researcher within it. For example, I found that one of the main challenges for STEM academics was the dichotomy between the value placed on research output and the importance of implementing instructional change. On the one hand, to help academics improve instruction, Deakin University's LIVE 2020 Agenda (2014a) called for every faculty to complete a university-wide Course Enhancement Process by 2016 (Deakin University, 2014b). The program aimed to harness the capabilities of blended learning, using technology for the purpose of student engagement. It was a university-wide collaborative effort between faculty members in each school. It focused on enabling graduates to become highly employable through course experiences that were personal, relevant and engaging wherever learning took place (on campus, in the cloud, or in industry settings). On the other hand, the faculty's research culture encouraged STEM teachers to pursue discipline-specific research and funding outputs for promotion and tenure. These opposing interests did not sit easily side by side.

Further, SEBE's organisational structure was such that teaching and learning projects commonly operated within a top-down decision making culture. This kind of organisational system is common in the higher education sector, especially when technology-driven initiatives are implemented at a university-wide level (Hains-Wesson, Wakeling & Aldred, 2014). This operational style is usually adopted because of limited finances, resources and time. As an alternative, Slade, Murfin and Readman (2013) advocated a middle-out approach. In this approach, mid-career academics, professional staff and learning designers work together (as teaching and learning champions) to showcase good practice in order to influence instructional change over a longer period of time. However, this approach was not always achievable in SEBE's context, because the university-wide Course Enhancement Process demanded urgent results.

In a similar study at a different university (Hains-Wesson et al., 2014), my colleagues and I explored the challenges of operating within a top-down decision making model similar to SEBE's. We found that functioning effectively as academic support staff while being responsible for strategic initiatives within a multi-faceted system (Hains-Wesson et al., 2014, p. 156) presented pedagogical uncertainties, a circumstance demanding further investigation.

Therefore, this study first describes and shares (via the 'I' experience) my practitioner's journey as the blended learning framework was developed and modified within SEBE's teaching and learning environment. Second, I interpret and analyse personal learnings, understandings and experiences before, during and after the design of the model in order to share my insights with those who operate in similar contexts (Reeves et al., 2011; Wozniak et al., 2012). The following research questions guided this qualitative, design-based inquiry.

A further complicating factor was that STEM academics were already implementing blended learning to differing degrees and had different learning and teaching needs. I chose a design experiment that incorporated self-report methods for three reasons. The methods were open, they involved reflection grounded in a personal venture (Mooney, 1957), and they were cyclic in nature while bridging theory and practice (Dyson, 2007; Opie, 2004). Design experiment and self-report methods allow teachers to be both researchers and observers (Lincoln & Denzin, 1994; Lincoln & Guba, 1985) and to contribute directly to their own self-realisation (Mooney, 1957). As Bakker (2004) puts it, "if you want to understand something you have to change it, and if you want to change something you have to understand it" (p. 37). However, Zeichner and Noffke (2001) cautioned that researchers who use such perspectives and methods may become limited by their preconceptions, because it is difficult to avoid a bias towards self-validation. Nevertheless, others have argued that such research thinking and practices strengthen educational discourse. They also support changes in curriculum and pedagogy that can improve the quality of students' learning experiences (Barab & Squire, 2004; Ruthven, 2005).

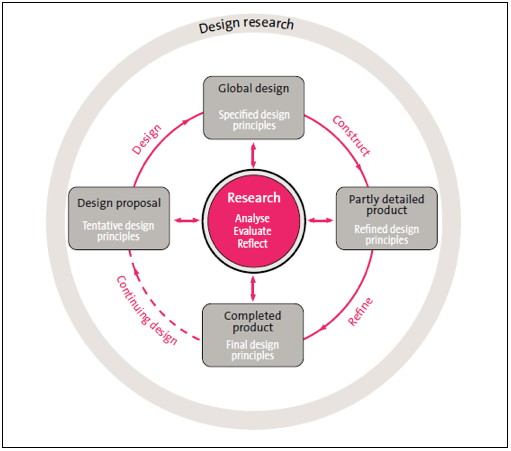

Figure 1: The design experiment cycles as suggested by Nieveen & Folmer (2013, p. 159)

The self-report method also helped me to understand the internal goings-on within the design experiment, which were often messy in nature. It provided a highly useful way of documenting and exploring the 'messiness' associated with such a complex methodology. This messiness took the form of reflection on self, other, and context around organic moments of discovery, which occurred in the present, continuously, and/or retrospectively (see Table 1). Additionally, this process enabled me to navigate effectively and to focus on my own understandings and those of my colleagues who operated within a top-down decision making culture. This, in turn, helped me to create alternative avenues for working effectively with STEM academics. These academics were often at the coal face of change, yet relied on initiatives and support being provided at the macro level. Table 1 illustrates how the key reflective strategies within the self-report method corresponded to the design experiment's cycle phases in terms of its inner, middle and outer circles (see Figure 1). To help explain this further, I have numbered (1-6) the key 'I' narratives as reflective strategies alongside the inner, middle and outer circles associated with the design experiment phases depicted in Figure 1.

Hamilton and Pinnegar (1998) pointed out that self-report studies using a similar process "involve a thoughtful look at texts read, experiences had, people known and ideas considered" (p. 236). In this study, these included "note-taking, memory work, narrative writing, observation and interview" (Hamilton, Smith & Worthington, 2008, p. 22), which formed the main data generation processes. Essentially, the methods allowed for a systematic approach to data collection, analysis, and interpretation about self, other and context (Ngunjiri et al., 2010), which aided in developing and/or modifying the blended learning model. I discuss each data generation process in more detail in the following section.

| No. | Key reflective strategies according to Fletcher and Bullock (2015) | No. | Key design experiment stages according to Nieveen & Folmer (2013) |
|---|---|---|---|
| 1 | Being aware of my self-focused perspective | 1 | Outer-circle: Design research |
| 2 | Improving my understanding of self and my practice | 2 | Outer-circle: Design research |
| 3 | Reflections | 3 | Inner-circle: Research - analyse, evaluate, reflect |
| 4 | Literature findings | 4 | Inner-circle: Research - analyse, evaluate, reflect |
| 5 | Community of inquiry: critically informed discussions and feedback | 5 | Mid-circle: Construct, refine, continuing design, and design |
| 6 | Reflections, community of inquiry and literature findings | 6 | Mid-circle: specified design principles, refined design principles, final design principles, tentative design principles |

| Self-report data generation (phases 1 and 2) | Theme | Turning point |
|---|---|---|
| Self-reflections | My understanding of STEM educators' learning styles and the pressures they face: a variety of ways to provide buy-in for instructional change | STEM educators desire professional development that is discipline-specific and easy to implement so that they can spend time on their research |
| Self-reflections | The need to work in ways consistent with STEM educators' learning styles: using online models, and how these learning styles will influence the delivery of professional development, resources and/or models | Finding new ways to present blended learning information that suit the teaching and learning culture of STEM educators |
| Self-reflections | Developing my professional identity as an individual, a team member and a researcher in the area of blended learning | The development of the blended learning model will be influenced by my teacher identity in terms of leadership, skills, and self-efficacy |

For example, responding simply to an educator's learning and teaching requirement, such as placing a rubric online, opened doors to later opportunities for support: "he/she does not necessarily want to chat with me [about blended learning]… just the basic stuff like setting up a rubric" (see Table 2). At times, STEM academics required help, but only after they had deliberately tried out innovations themselves, away from me. Once this pattern had repeated itself a few times with good results, I found that academics often dropped in for 'just-in-time' support. They also phoned, emailed or made an appointment to see me face-to-face, for example: "thanks for the discussion today about using video for student feedback. I will get back to you if I have any trouble" (field note entry, 2015). When an academic felt confident to discuss the faculty's approach to blended learning, the conversations lasted for some time and were robust, informative, two-way problem-solving events. Additionally, the community of inquiry often talked about problematic conversations with particular staff who were struggling to make changes in their teaching practice and/or course curriculum design work. Members of the community of inquiry readily shared advice in order to boost self and team confidence, such as "don't give up - it's just better not to wait, that's all" (see Table 2).

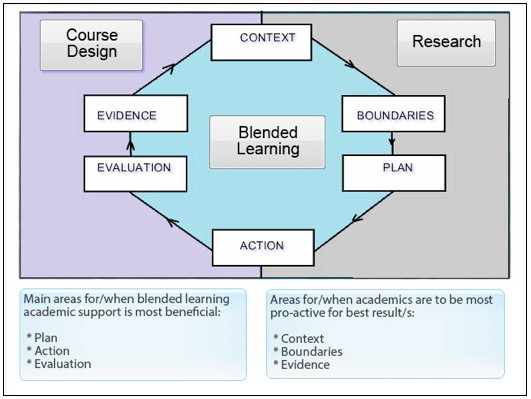

This led to providing the blended learning framework in an online format so that academics could access it anywhere and at any time. Figure 2 shows the blended learning model as an artefact (see https://youtu.be/2YGkfBSfO58 for more detail). There were three main reasons for presenting additional information about the blended learning framework via an openly accessible resource such as YouTube. First, it allowed academics to view and explore the resource in their own time, wherever they had Internet access. This matters because instruction is best designed to meet the needs of a variety of learners (Picciano, 2009). Second, I came to realise that it was safe to employ online professional development strategies when options were limited. The benefits of online and face-to-face professional development have been found to be similar (Herold, 2013).

Figure 2: Blended learning model for STEM for improving practice and instructional change

(see https://youtu.be/2YGkfBSfO58 for a full explanation)

I came to understand my identity as a practitioner in a STEM-centric faculty as both positive and negative. For example, I found myself trying to refine a process while working with others and receiving external feedback that then required further refinement of the model. In many ways, I was struggling to reconcile how instructional change works as a general concept with a STEM-centric way of doing things. As a consequence, I was discovering a new way of working in which I felt torn between supporting academics at course level and supporting individuals, without having extensive knowledge of STEM subjects. Most of my opportunities to support STEM academics were at a one-on-one and/or just-in-time level. Sunal et al. (2001) argued that faculty members are interested in just-in-time approaches because these are more personal, noting that change is always difficult. As I became more confident in this area ("it is your thing. Can you do this more"; see Table 2), I felt I had finally turned a corner. I now had something worth offering staff. This contrasted with my original perspective, which was based on intuition, personal perceptions and a feeling of being stuck when operating within a top-down decision making culture.

Stronger forms of collaboration are important in developmental research aiming to define good practice in teaching and learning because of the centrality of practitioner knowledge and thinking in realising such practice (p. 424).

The personal 'I' story of an academic support person's experiences of artefact making and process is important because it highlights the difficulties of pedagogical change in higher education institutions. It also shows the importance of this work for academic support professionals, who often work for and within top-down decision making cultures. I have critically challenged myself to verify or contradict my intuitions and personal biases. In turn, I have discovered a number of key learning issues:

Bakker, A. (2004). Design research in statistics education: On symbolizing and computer tools. Utrecht, The Netherlands: CD-Beta Press.

Barab, S. & Squire, K. (2004). Design-based research: Putting a stake in the ground. Journal of the Learning Sciences, 13(1), 1-14. http://dx.doi.org/10.1207/s15327809jls1301_1

Boud, D. J. (1986). Implementing student self assessment. Higher Education Research and Development Society of Australia Green Guide No. 5. Sydney: Higher Education Research and Development Society of Australia.

Bullock, S. M. & Ritter, J. K. (2011). Exploring the transition into academia through collaborative self-study. Studying Teacher Education, 7(2), 171-181. http://dx.doi.org/10.1080/17425964.2011.591173

Cohen, D. (1988). Teaching practice: Plus a change. (Issue paper No. 88-3). East Lansing, MI: Michigan State University, The National Center for Research on Teacher Education.

Collins, A., Joseph, D. & Bielaczyc, K. (2004). Design research: Theoretical and methodological issues. Journal of the Learning Sciences, 13(1), 15-42.

Deakin University (2014a). LIVE the Future AGENDA 2020, Course Enhancement Guidelines, 2014. http://www.deakin.edu.au/__data/assets/pdf_file/0003/224193/Course-enhancement-guidelines-2014.pdf

Deakin University (2014b). Deakin Curriculum Framework. http://www.deakin.edu.au/learning/deakin-curriculum-framework

Dobson, I. A. (2014). Staffing university science in the twenty-first century. Australian Council of Deans of Science Victoria: Educational Policy Institute Pty Ltd. http://www.acds.edu.au/wp-content/uploads/sites/6/2015/05/ACDS-Science-Staffing-2014_August_Final.pdf

Dyson, M. (2007). My story in a profession of stories: Auto ethnography - an empowering methodology for educators. Australian Journal of Teacher Education, 32(1). http://dx.doi.org/10.14221/ajte.2007v32n1.3

Fairweather, J. (2008). Linking evidence and promising practices in science, technology, engineering, and mathematics (STEM) undergraduate education. The National Academies National Research Council Board of Science Education. http://sites.nationalacademies.org/cs/groups/dbassesite/documents/webpage/dbasse_072637.pdf

Fairweather, J. S. & Paulson, K. (2008). The evolution of American scientific fields: Disciplinary differences versus institutional isomorphism. In Cultural perspectives on higher education (pp. 197-212). Springer Netherlands. http://www.springer.com/us/book/9781402066030

Finn, A. (2002). Trends in e-learning. Learning Circuits, 3. [viewed at http://www.learningcircuits.org/2002/nov2002/finn.htm; not found 14 Nov 2015, see http://www.cedma-europe.org/newsletter%20articles/TrainingZONE/Trends%20in%20e-Learning%20(26%20Jul%2004).pdf]

Fletcher, T. & Bullock, S. M. (2015). Reframing pedagogy while teaching about teaching online: A collaborative self-study. Professional Development in Education, 41(4), 690-706. http://dx.doi.org/10.1080/19415257.2014.938357

Garet, M. S., Porter, A. C., Desimone, L., Birman, B. F. & Yoon, K. S. (2001). What makes professional development effective? Results from a national sample of teachers. American Educational Research Journal, 38(4), 915-945. http://dx.doi.org/10.3102/00028312038004915

Guitart, D., Pickering, C. & Byrne, J. (2012). Past results and future directions in urban community gardens research. Urban Forestry & Urban Greening, 11(4), 364-373. http://dx.doi.org/10.1016/j.ufug.2012.06.007

Hains-Wesson, R. (2013). Why do you write? Creative writing and the reflective teacher. Higher Education Research & Development, 32(2), 328-331. http://dx.doi.org/10.1080/07294360.2013.770434

Hains-Wesson, R., Wakeling, L. & Aldred, P. (2014). A university-wide ePortfolio initiative at Federation University Australia: Software analysis, test-to-production, and evaluation phases. International Journal of ePortfolios, 4(2), 143-156. http://www.theijep.com/pdf/IJEP147.pdf

Hall, A. & Herrington, J. (2010). The development of social presence in online Arabic learning communities. Australasian Journal of Educational Technology, 26(7), 1012-1027. http://ajet.org.au/index.php/AJET/article/view/1031/292

Hamilton, M. L., Smith, L. & Worthington, K. (2008). Fitting the methodology with the research: An exploration of narrative, self-study and auto-ethnography. Studying Teacher Education, 4(1), 17-28. http://dx.doi.org/10.1080/17425960801976321

Hamilton, M. L. & Pinnegar, S. (1998). Conclusion: The value and the promise of self-study. In M. L. Hamilton (Ed.), Reconceptualising teaching practice: Self-study in teacher education (pp. 235-246). London: Falmer Press.

Herold, B. (2013). Benefits of online, face-to-face professional development similar, study finds. Education Week - Digital Education, 20 June. http://blogs.edweek.org/edweek/DigitalEducation/2013/06/no_difference_between_online_a.html

Hughes, S., Pennington, J. L. & Makris, S. (2012). Translating autoethnography across the AERA standards: Toward understanding autoethnographic scholarship as empirical research. Educational Researcher, 41(6), 209-219. http://dx.doi.org/10.3102/0013189X12442983

Jayarajah, K., Saat, M. R. & Rauf, A. A. (2014). A review of science, technology, engineering and mathematics (STEM) education research from 1999-2013: A Malaysian perspective. Eurasia Journal of Mathematics, Science and Technology Education, 10(3), 155-163. http://dx.doi.org/10.12973/eurasia.2014.1072a

Lefoe, G., Philip, R., O'Reilly, M. & Parrish, D. (2009). Sharing quality resources for teaching and learning: A peer review model for the ALTC Exchange in Australia. Australasian Journal of Educational Technology, 25(1), 45-59. http://ajet.org.au/index.php/AJET/article/view/1180/408

Lincoln, Y. S. & Denzin, N. K. (1994). The fifth moment. In N. K. Denzin & Y. S. Lincoln (Eds.), Handbook of qualitative research (pp. 575-586). Thousand Oaks, CA: SAGE.

Lincoln, Y. S. & Guba, E. G. (1985). Naturalistic inquiry. Newbury Park: SAGE.

Marginson, S., Tytler, R., Freeman, B. & Roberts, K. (2013). STEM: Country comparisons, International comparisons of science, technology, engineering and mathematics (STEM) education. Australian Council of Learned Academies. http://www.acola.org.au/index.php/projects/securing-australia-s-future/project-2

Merga, M. (2015). Thesis by publication in education: An autoethnographic perspective for educational researchers. Issues in Educational Research, 25(3), 291-308. http://www.iier.org.au/iier25/merga.html

Mooney, R. L. (1957). The researcher himself. In Research for curriculum improvement. Association for Supervision and Curriculum Development, 1957 yearbook (pp. 154-186). Washington, DC: Association for Supervision and Curriculum Development.

Ngunjiri, F. W., Hernandez, K. C. & Chang, H. (2010). Editorial: Living autoethnography: Connecting life and research. Journal of Research Practice, 6(1), Article E1. http://jrp.icaap.org/index.php/jrp/article/view/241/186

Nicholl, H. & Higgins, A. (2004). Reflection in preregistration nursing curricula. Journal of Advanced Nursing, 46(6), 578-585. http://dx.doi.org/10.1111/j.1365-2648.2004.03048.x

Nieveen, N. & Folmer, E. (2013). Formative evaluation in educational design research. In T. Plomp & N. Nieveen (Eds.), Educational design research - part A: An introduction (pp. 152-169). Enschede, the Netherlands: SLO.

Office of the Chief Scientist (2014). Science, technology, engineering and mathematics: Australia's future. Australian Government, Canberra. http://www.chiefscientist.gov.au/wp-content/uploads/STEM_AustraliasFuture_Sept2014_Web.pdf

Office of the Chief Scientist (2015). STEM skills in the workforce: What do employers want? Australian Government, Canberra. http://www.chiefscientist.gov.au/2015/04/occasional-paper-stem-skills-in-the-workforce-what-do-employers-want/

Opie, C. (2004). Doing educational research. USA: SAGE.

Penuel, W. R., Fishman, B. J., Yamaguchi, R. & Gallagher, L. P. (2007). What makes professional development effective? Strategies that foster curriculum implementation. American Educational Research Journal, 44(4), 921-958. http://dx.doi.org/10.3102/0002831207308221

Picciano, A. G. (2009). Blending with purpose: The multimodal model. Journal of the Research Center for Educational Technology (RCET), 5(1), 4-14. http://www.rcetj.org/index.php/rcetj/article/viewFile/11/14

Pickering, C. & Byrne, J. (2014). The benefits of publishing systematic quantitative literature reviews for PhD candidates and other early-career researchers. Higher Education Research & Development, 33(3), 534-548. http://dx.doi.org/10.1080/07294360.2013.841651

Prinsley, R. & Baranyai, K. (2015). STEM skills in the workforce: What do employers want? Occasional Papers, Issue 9, Office of the Chief Scientist, Australia. http://www.chiefscientist.gov.au/wp-content/uploads/OPS09_02Mar2015_Web.pdf

Reay, J. (2001). Blended learning - a fusion for the future. Knowledge Management Review, 4(3), 6. [viewed at http://www.melcrum.com/products/journals/kmr.shtml; not found 14 November 2015]

Reeves, T. C., McKenney, S. & Herrington, J. (2011). Publishing and perishing: The critical importance of educational design research. Australasian Journal of Educational Technology, 27(1), 55-65. http://ajet.org.au/index.php/AJET/article/view/982/255

Roy, S., Byrne, J. & Pickering, C. (2012). A systematic quantitative review of urban tree benefits, costs, and assessment methods across cities in different climatic zones. Urban Forestry & Urban Greening, 11(4), 351-363. http://dx.doi.org/10.1016/j.ufug.2012.06.006

Ruthven, K. (2005). Improving the development and warranting of good practice in teaching. Cambridge Journal of Education, 35(3), 407-426. http://dx.doi.org/10.1080/03057640500319081

Schön, D. (1983). The reflective practitioner. New York: Basic Books.

Slade, C., Murfin, K. & Readman, K. (2013). Evaluating processes and platforms for potential ePortfolio use: The role of the middle agent. International Journal of ePortfolios, 3(2), 177-188. http://www.theijep.com/pdf/IJEP114.pdf

Starr, L. J. (2010). The use of autoethnography in educational research: Locating who we are in what we do. Canadian Journal for New Scholars in Education, 3(1) June 2010. http://cjnse.journalhosting.ucalgary.ca/ojs2/index.php/cjnse/article/viewFile/149/112

Steven, R., Pickering, C. & Castley, J. G. (2011). A review of the impacts of nature based recreation on birds. Journal of Environmental Management, 92(10), 2287-2294. http://dx.doi.org/10.1016/j.jenvman.2011.05.005

Student Evaluation of Teaching and Units (SETU) (2013). Summary report for Trimester 3. Deakin University. https://www.deakin.edu.au/planning-unit/surveys/public/setu-report-tri3-12.pdf

Sunal, D. W., Hodges, J., Sunal, C. S., Whitaker, K. W., Freeman, L. M., Edwards, L., Johnston, R. A. & Odell, M. (2001). Teaching science in higher education: Faculty professional development and barriers to change. School Science and Mathematics, 101(5), 246-257. http://dx.doi.org/10.1111/j.1949-8594.2001.tb18027.x

The Australian Industry Group (2015). Progressing STEM skills in Australia. http://www.aigroup.com.au/portal/binary/com.epicentric.contentmanagement.servlet.ContentDeliveryServlet/LIVE_CONTENT/Publications/Reports/2015/14571_STEM%20Skills%20Report%20Final%20-.pdf

The White House (2009). Press release: President Obama launches 'educate to innovate' campaign for excellence in science, technology, engineering and mathematics (STEM) education. http://www.whitehouse.gov/the-press-office/president-obama-launches-educate-innovate-campaign-excellence-science-technology-en

Torrisi-Steele, G. (2011). This thing called blended learning - a definition and planning approach. In Research and Development in Higher Education: Reshaping Higher Education, 34, 360-371 (Proceedings HERDSA 2011). http://www.herdsa.org.au/wp-content/uploads/conference/2011/papers/HERDSA_2011_Torrisi-Steele.PDF

University Experience Survey National Report (2014). Australian Government, Department of Education and Training. https://education.gov.au/university-experience-survey

Walter, M. M. (Ed.) (2011). Social research methods. Australia: Oxford University Press.

Watson, J. (2008). Blended learning: The convergence of online and face-to-face education. Vienna, VA: North American Council for Online Learning. http://files.eric.ed.gov/fulltext/ED509636.pdf

Wilson, S. M. (2013). Professional development for science teachers. Science, 340(6130), 310-313. http://dx.doi.org/10.1126/science.1230725

Wozniak, H., Pizzica, J. & Mahony, M. J. (2012). Design-based research principles for student orientation to online study: Capturing the lessons learnt. Australasian Journal of Educational Technology, 28(5), 896-911. http://ajet.org.au/index.php/AJET/article/download/823/120

Yarker, M. B. & Park, S. (2012). Analysis of teaching resources for implementing an interdisciplinary approach in the K-12 classroom. Eurasia Journal of Mathematics, Science and Technology Education, 8(4), 223-232. http://www.ejmste.com/v8n4/eurasia_v8n4_yarker.pdf

Zeichner, K. & Noffke, S. (2001). Practitioner research. In V. Richardson (Ed.), Handbook of research on teaching (4th edn, pp. 298-330). Washington, DC: American Educational Research Association.

Zellermayer, M. & Tabak, E. (2006). Knowledge construction in a teachers' community of enquiry: A possible road map. Teachers and Teaching: Theory and Practice, 12(1), 33-49. http://dx.doi.org/10.1080/13450600500364562